The human eye as a proxy for the future of networking

Thu, 24th Oct 2019

It was once thought that the eye was a 'dumb' sensor, sending data along the optic nerve to the brain, which contained all the visual 'processing' capabilities.

But in recent years scientists have been unravelling the intricate structure and wiring of retinal neurons within the eye.

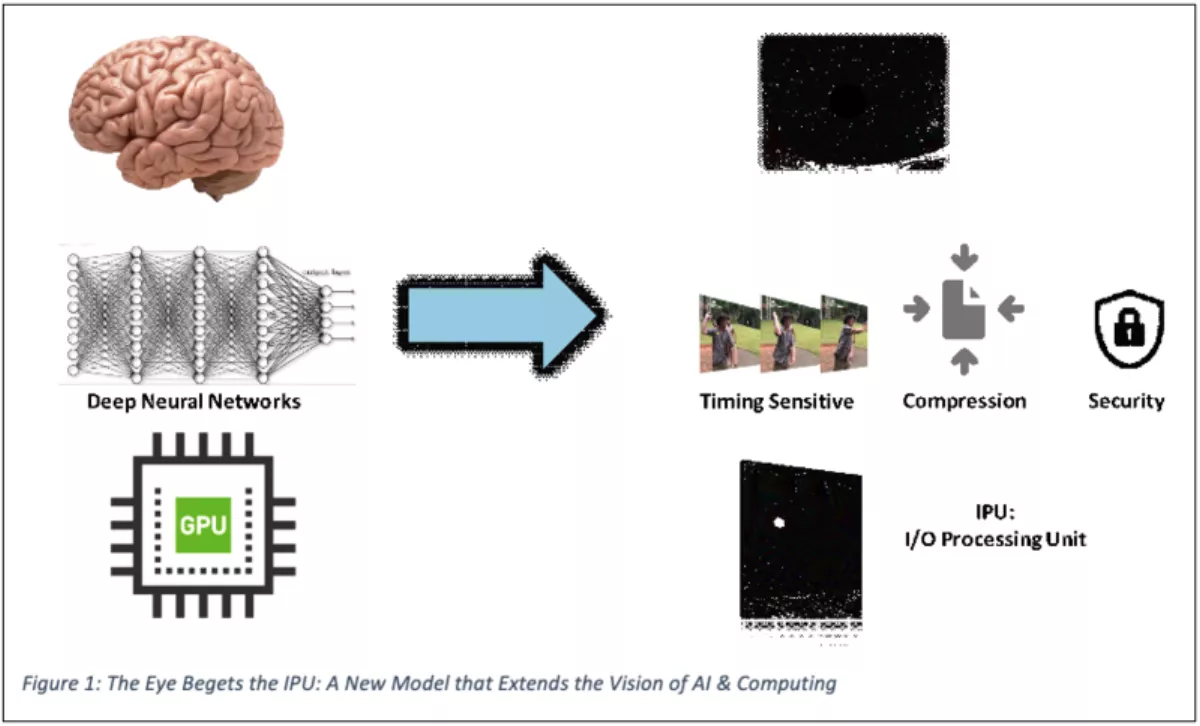

Just as studies of the brain have done much to inform modern Artificial Intelligence (AI), so have these discoveries about the visual system suggested an interesting framework to guide future development of similarly complex processing tasks in today's highly specialised, distributed and interconnected data centers.

The neural networks of the brain provided inspiration for new algorithms that have sparked the AI and machine learning (ML) revolution.

In the last decade, remarkable progress has been made with programmable GPUs, which can now solve highly challenging speech and image recognition problems with human-like performance.

The extended neural networks of the eye provide a new model for the extension of these AI and ML algorithms, towards a more distributed computing paradigm that includes smart networking devices at the edge.

And just as the GPU was able to implement the deep neural networks of the brain, the eye inspires a new type of processor: the I/O Processing Unit (IPU), which combines eye-like remote processing with the ability to consolidate information so that it can be transported efficiently to centralised data centers for additional computation.

The visual system of living organisms

There are more than 50 different types of neurons in the retina of the eye, all connected in a precise way to form specific sub-structures that pre-process critical data flows.

For example, the ability to detect movement has vital implications for survival – especially if a missile is hurled straight at your face.

An object getting bigger suggests movement and, if it is getting bigger with no significant lateral movement, then it is likely to hit you.

This information demands very rapid responses – even bypassing the normal visual processing in the brain – to trigger a ducking reaction.

Even if it turns out to be false data, at least a possible disaster has been avoided.
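The looming rule described above (growing larger with little sideways movement) can be sketched in a few lines of Python. This is only an illustration of the reasoning, not a model of the retina: the `Observation` fields, the `is_looming` function and both thresholds are hypothetical choices made for this example.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    """One snapshot of a tracked object (all fields are illustrative)."""
    t: float     # timestamp in seconds
    x: float     # horizontal position of the object's centre, in degrees
    size: float  # apparent (angular) size, in degrees


def is_looming(prev: Observation, curr: Observation,
               min_growth: float = 1.0, max_drift: float = 0.5) -> bool:
    """Return True when the object expands quickly while barely moving sideways.

    min_growth: relative expansion per second needed to count as approaching.
    max_drift:  sideways movement, in object-sizes per second, still treated
                as "no significant lateral movement".
    Both thresholds are arbitrary values picked for the sketch.
    """
    dt = curr.t - prev.t
    if dt <= 0 or prev.size <= 0:
        return False  # cannot judge without a valid time step and size
    growth_rate = (curr.size - prev.size) / (prev.size * dt)
    drift_rate = abs(curr.x - prev.x) / (prev.size * dt)
    return growth_rate > min_growth and drift_rate < max_drift


if __name__ == "__main__":
    head_on = is_looming(Observation(0.0, 0.0, 1.0), Observation(0.1, 0.0, 1.5))
    passing = is_looming(Observation(0.0, 0.0, 1.0), Observation(0.1, 2.0, 1.5))
    print("head-on object triggers duck:", head_on)
    print("passing object triggers duck:", passing)
```

The check deliberately errs on the side of reacting: it looks at only two snapshots and makes no attempt to confirm what the object is, which mirrors the trade-off of speed over certainty.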

How does all this beautiful intricacy within the retina contribute to visual functionality?

There are many different retinal sub-structures, each computing something specific and critical about the visual scene before the information is conveyed via the ganglion cells through the optic nerve to the brain.

The retina performs many different types of processing, detecting different aspects of the visible scene and conveying consolidated information of various modalities to the brain:

- Temporal – Detection of even faint blinking of light against a strong background

- Spatial – Pattern detection, particularly of bilateral symmetry

- Kinematic – Detection of object motion

The temporal sensitivity here is astounding: even single-photon detection is possible when the signal is periodic and at the right speed, neither too fast nor too slow.

New models for data center and edge processing and connectivity

Today's more advanced data centers are developing along lines similar to the distributed intelligence of the human visual system (HVS), using SmartNICs and I/O Processing Units (IPUs) with built-in processors to allow important decisions to be made at the edge, in real time.

So just as the eye has temporal, spatial, and motion detection modalities, an IPU-based SmartNIC or controller can provide detailed information about the timing, location, and flow of information.

Similarly, an IPU can automatically tag where information is coming from and map it to the right virtual network to transport it where it needs to go. Then, on receipt, it can route the data to exactly the right location in a specific virtual machine's memory.

But IPUs can do even more: they can deal with time-sensitive data, compress it so that less bandwidth is needed to move it to a centralised location, and accelerate functions like storage and security.

Consider cybersecurity: in a cloud-connected world with BYOD and wireless connectivity, we can no longer define a clear network perimeter and defend it with firewalls.

We also need an ability to detect possible malware, or simply anomalous behaviour, anywhere in the network.

An intelligent IPU-based SmartNIC can detect such anomalies on the spot and take immediate mitigation measures, such as blocking or diverting a data flow, instead of sending the data via the network to a centralised compute resource for analysis.
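As a rough illustration of what "on the spot" detection could look like, the Python sketch below keeps a smoothed traffic baseline per flow and blocks a flow locally when one measurement far exceeds it. It is a toy model under stated assumptions, not a description of any real SmartNIC or IPU firmware: the `EdgeFlowMonitor` class, its parameters and the byte-count threshold rule are all hypothetical.

```python
from collections import defaultdict


class EdgeFlowMonitor:
    """Hypothetical per-flow anomaly check that runs locally at the edge."""

    def __init__(self, alpha: float = 0.2, threshold: float = 5.0, warmup: int = 3):
        self.alpha = alpha            # smoothing factor for the running baseline
        self.threshold = threshold    # multiple of the baseline treated as anomalous
        self.warmup = warmup          # samples to observe before judging a flow
        self.baseline = {}            # flow id -> smoothed bytes per interval
        self.seen = defaultdict(int)  # flow id -> number of samples observed

    def observe(self, flow_id: str, byte_count: int) -> str:
        """Return 'forward' or 'block' for one measurement interval of a flow."""
        self.seen[flow_id] += 1
        base = self.baseline.get(flow_id)
        if base is None:
            # First sample of a new flow: nothing to compare against yet.
            self.baseline[flow_id] = float(byte_count)
            return "forward"
        if self.seen[flow_id] > self.warmup and byte_count > self.threshold * base:
            # Anomalous burst: act locally and leave the baseline untouched.
            return "block"
        # Normal traffic: fold the sample into the exponentially weighted baseline.
        self.baseline[flow_id] = (1 - self.alpha) * base + self.alpha * byte_count
        return "forward"


if __name__ == "__main__":
    monitor = EdgeFlowMonitor()
    for _ in range(5):
        monitor.observe("vm-a", 1_000)           # steady traffic builds the baseline
    print(monitor.observe("vm-a", 50_000))       # sudden burst on the same flow
    print(monitor.observe("vm-a", 1_000))        # traffic back to normal
```

The point of the sketch is the placement of the decision rather than the statistics: the verdict is reached from state held at the edge, so no packet has to travel to a central analyser before a flow is blocked or diverted.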

Today's "hyperscale" data centers – the sort of system powering Internet giants like Amazon Web Services, Facebook, Microsoft and Alibaba – contain hundreds of thousands of powerful servers connected by thousands of miles of network cable.

Google is estimated to have 900,000 servers across its 13 data centers worldwide, using enough electricity to power 200,000 homes.

The question is: how best to integrate a vast and complex set of parts into one highly functional and efficient whole?

As we see with the human visual system, there is an evolutionary advantage in distributing critical intelligence into the system rather than sending every signal to the brain for processing.

So the move is to make the network itself more intelligent by installing smart network cards that not only respond to normal operating conditions in a more efficient, seamless manner, but can also respond rapidly to anomalous or potentially dangerous activity in the network and take action without waiting for instructions from the centralised intelligence of a traditional data center.

Where is this taking us, other than towards more efficient, self-healing data centers?

New developments in automation and machine learning reflect the evolutionary and emergent processes that have made our visual senses so smart.

A notable example is the rise of "intent-based" networks, which are able to realise higher-level business intentions, like automatically recognising and nullifying cyber-attacks or working around failed systems, rather than requiring a team of technicians to laboriously re-program every single element of the system.