NVIDIA unveils its first GPU featuring Ampere architecture

Fri, 15th May 2020

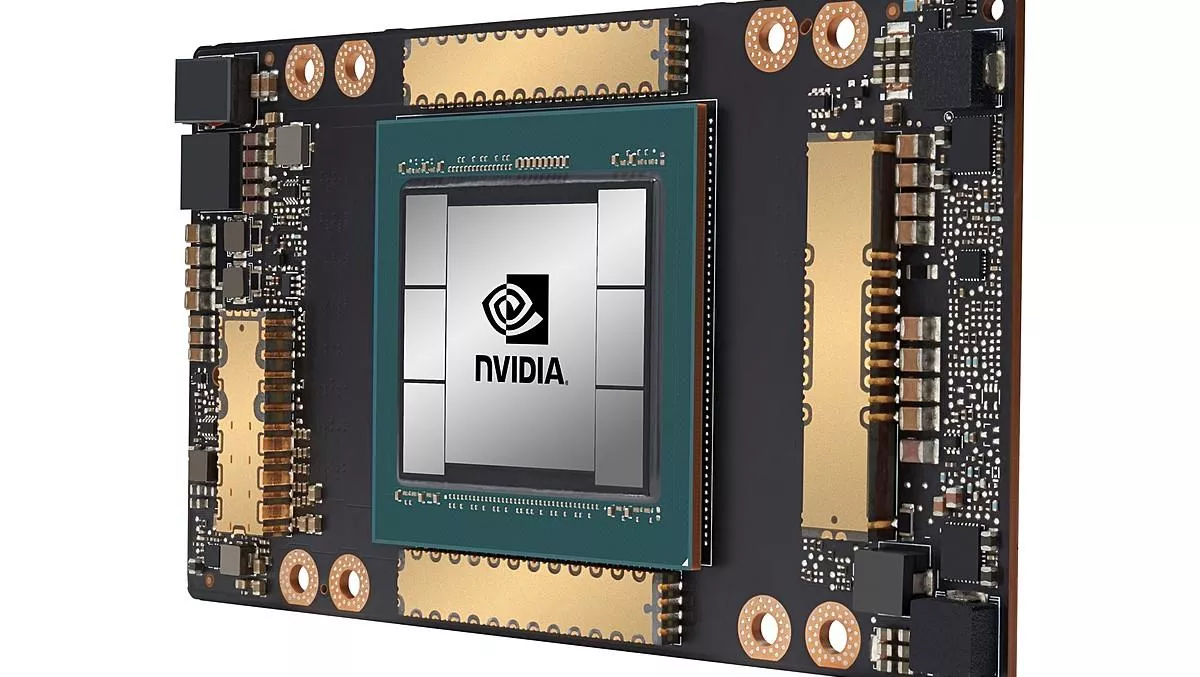

NVIDIA has today announced that the very first GPU based on the company's Ampere architecture is generally available and shipping to customers worldwide.

The GPU, called the NVIDIA A100, unifies AI training and inference and delivers up to 20 times the performance of its predecessors.

It comes as the world's demand for data sees an unprecedented surge, with people across the globe staying home and relying on tools powered by the cloud.

The NVIDIA A100 includes multi-instance GPU capability, allowing it to be partitioned into as many as seven independent instances for inferencing tasks, while third-generation NVIDIA NVLink interconnect technology allows multiple A100 GPUs to operate as one giant GPU for ever-larger training tasks.

NVIDIA says almost all major cloud providers expect to incorporate the GPU into their offerings, including Azure, AWS, Google Cloud, Alibaba Cloud, Oracle, and more.

A universal workload accelerator, the A100 is also built for data analytics, scientific computing and cloud graphics.

"The powerful trends of cloud computing and AI are driving a tectonic shift in data center designs so that what was once a sea of CPU-only servers is now GPU-accelerated computing," says NVIDIA founder and CEO Jensen Huang.

"NVIDIA A100 GPU is a 20x AI performance leap and an end-to-end machine learning accelerator — from data analytics to training to inference.

"[It] will simultaneously boost throughput and drive down the cost of data centers."

NVIDIA says its newest GPU draws on five key breakthroughs. They are:

- NVIDIA Ampere architecture — At the heart of A100 is the NVIDIA Ampere GPU architecture, which contains more than 54 billion transistors, making it the world's largest 7-nanometer processor.

- Third-generation Tensor Cores with TF32 — The Tensor Cores are now more flexible, faster and easier to use. Their expanded capabilities include new TF32 for AI, which allows for up to 20x the AI performance of FP32 precision, without any code changes. Tensor Cores also now support FP64, delivering up to 2.5x more compute than the previous generation for HPC applications.

- Multi-instance GPU — MIG, a new technical feature, enables a single A100 GPU to be partitioned into as many as seven separate GPUs so it can deliver varying degrees of compute for jobs of different sizes, providing optimal utilisation and maximising return on investment.

- Third-generation NVIDIA NVLink — Doubles the high-speed connectivity between GPUs to provide efficient performance scaling in a server.

- Structural sparsity — This new efficiency technique harnesses the inherently sparse nature of AI math to double performance.
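
To make the TF32 item above more concrete, here is a minimal Python sketch. It is not NVIDIA code. It assumes the commonly documented TF32 layout, which keeps FP32's 8-bit exponent but only 10 of its 23 mantissa bits; the announcement itself does not spell this out. The function name `to_tf32` is hypothetical, and the sketch truncates rather than rounds, which may differ from what the hardware does.

```python
import struct

# Assumption: TF32 keeps FP32's 8 exponent bits and 10 of its 23 mantissa bits.
TF32_MANTISSA_BITS = 10
FP32_MANTISSA_BITS = 23


def to_tf32(x: float) -> float:
    """Return x with its FP32 mantissa truncated to TF32 width (illustrative only)."""
    # Reinterpret the FP32 encoding of x as a 32-bit unsigned integer.
    bits = struct.unpack("<I", struct.pack("<f", x))[0]
    # Clear the 13 low-order mantissa bits that TF32 does not keep.
    dropped = FP32_MANTISSA_BITS - TF32_MANTISSA_BITS
    mask = (0xFFFFFFFF >> dropped) << dropped
    return struct.unpack("<f", struct.pack("<I", bits & mask))[0]


if __name__ == "__main__":
    for value in (1.0, 1.0 + 2**-10, 1.0 + 2**-11, 0.1):
        print(value, "->", to_tf32(value))
```

Under these assumptions, the range of representable values matches FP32 while precision drops to roughly three decimal digits. That is one way to see why existing FP32 code could run on the new format without changes.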

Microsoft will be one of the first companies to take advantage of the A100, using it to enable better training and bolster Azure's performance and scalability.

"Microsoft trained Turing Natural Language Generation, the largest language model in the world, at scale using the current generation of NVIDIA GPUs," says Microsoft Corp corporate vice president Mikhail Parakhin.

"Azure will enable training of dramatically bigger AI models using NVIDIA's new generation of A100 GPUs to push the state of the art on language, speech, vision and multi-modality."