Nvidia has outlined a plan for virtualised game development that moves key production work from desk-based workstations to centralised GPU infrastructure in the data centre.

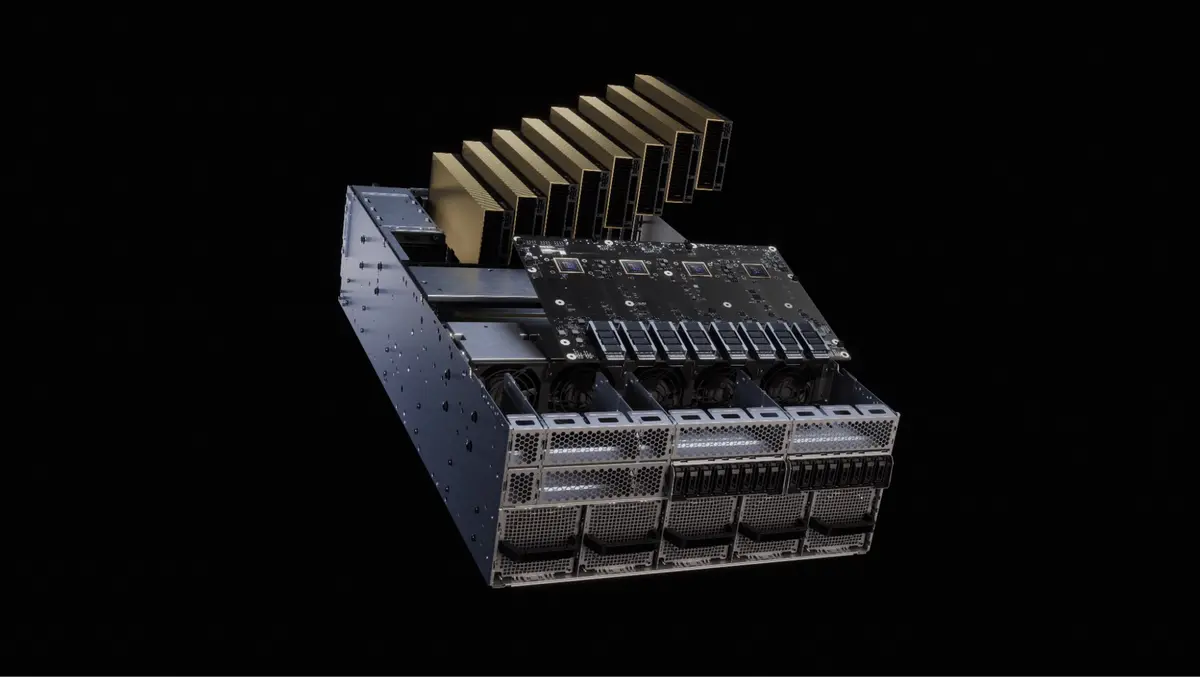

The approach centres on RTX PRO Servers built with RTX PRO 6000 Blackwell Server Edition GPUs and Nvidia vGPU software. Nvidia presented the concept at the Game Developers Conference in San Francisco as a way for studios to run creative production, engineering, AI work and quality assurance on shared infrastructure.

Game studios have expanded the scale of worlds, assets and tooling in recent years. Many teams also work across multiple sites, often with contractors and external partners. Despite those changes, much day-to-day production still relies on fixed workstations that can be difficult to scale and standardise across locations.

Centralised compute

RTX PRO Server is positioned as an alternative to adding more individual workstations. It pools GPU resources and allocates them to teams and tasks as needed, aiming to reduce the waste that arises when some machines sit idle while other users wait for access to suitable hardware.

Quality assurance is a common pressure point, as capacity can be hard to expand quickly during testing and release cycles. Problems also arise when hardware, drivers and tools vary between teams, complicating bug reproduction and slowing validation, especially when development and QA are split across sites.

Nvidia also argues that AI workloads in game studios often run on separate infrastructure, adding operational overhead and increasing the number of systems IT teams must manage.

Under the RTX PRO Server model, studios would virtualise workflows for creative, engineering, AI research and QA teams on the same platform. Nvidia says the setup maintains the responsiveness and visual fidelity expected from workstation-class systems while improving utilisation, scalability, data security and operational consistency.

Shared workflows

Nvidia outlined four main user groups for the virtualised setup. Artists would use virtual RTX workstations for 3D graphics and generative AI content creation. Developers would work in standardised engineering environments for coding and 3D development. AI researchers would receive larger-memory GPU profiles for fine-tuning, inference and AI agent work. QA teams would run validation and performance testing on the Blackwell architecture, which also underpins GeForce RTX 50 Series GPUs.

A key operational goal is to run different classes of workload on the same infrastructure throughout the day. Studios could schedule AI training, simulation and automation overnight, then reassign the same GPU resources to interactive development during working hours. The time-sharing model targets higher utilisation and less idle capacity.
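The time-sharing idea can be illustrated with a minimal Python sketch. It is not Nvidia's scheduler or any part of the vGPU software: the `Window` type, the `SCHEDULE` table, the 08:00 to 19:00 working hours and the workload labels are all hypothetical, chosen only to show how one pool of GPU capacity might be assigned to a different class of work depending on the time of day.

```python
from dataclasses import dataclass
from datetime import time


@dataclass(frozen=True)
class Window:
    """A hypothetical daily time window and the workload class it favours."""
    name: str
    start: time
    end: time
    workload: str


# Assumed schedule for illustration only; a real studio would set its own hours.
SCHEDULE = [
    Window("working hours", time(8, 0), time(19, 0), "interactive"),
    Window("overnight", time(19, 0), time(8, 0), "batch"),
]


def in_window(now: time, window: Window) -> bool:
    """Return True if `now` falls inside the window, including windows that wrap past midnight."""
    if window.start <= window.end:
        return window.start <= now < window.end
    # The window wraps midnight, e.g. 19:00 to 08:00.
    return now >= window.start or now < window.end


def workload_for(now: time) -> str:
    """Pick the workload class that should hold the shared GPU pool at `now`."""
    for window in SCHEDULE:
        if in_window(now, window):
            return window.workload
    raise ValueError("schedule does not cover this time")


if __name__ == "__main__":
    for sample in (time(10, 0), time(23, 30), time(3, 0)):
        print(sample.strftime("%H:%M"), "->", workload_for(sample))
```

In this sketch, "interactive" stands for virtual workstation sessions and "batch" for AI training, simulation and automation jobs. A production system would also need to drain or checkpoint batch jobs before handing capacity back, which is not modelled here.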

Blackwell features

At the centre of the pitch is the RTX PRO 6000 Blackwell Server Edition GPU, which Nvidia says includes a 96GB memory buffer. The company says this lets developers run multiple demanding applications at once and perform AI inference on larger models alongside real-time graphics work.

The platform also uses Nvidia Multi-Instance GPU technology, which partitions a single GPU into isolated instances with dedicated memory, compute and cache resources. This is paired with vGPU software that supports virtual GPU profiles delivered through virtualisation platforms.

Nvidia says a single RTX PRO 6000 Blackwell Server Edition GPU can support up to 48 concurrent users in combined MIG and vGPU configurations. It presented this as a way to allocate capacity securely across users and workloads while keeping performance isolated.
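As a rough back-of-envelope check on those figures, the Python sketch below divides the quoted 96GB of memory evenly across users. The even split is an assumption made purely for illustration: the article does not say how the 48-user configuration is composed, and actual profile sizes are defined by Nvidia's MIG and vGPU software. The function names are hypothetical.

```python
GPU_MEMORY_GB = 96  # memory figure quoted by Nvidia in the article
MAX_USERS = 48      # concurrent-user figure quoted by Nvidia in the article


def even_share_gb(total_gb: int, users: int) -> float:
    """Memory per user if the GPU were split evenly (an illustrative assumption)."""
    if users <= 0:
        raise ValueError("users must be positive")
    return total_gb / users


def users_at_profile(total_gb: int, profile_gb: int) -> int:
    """How many equal-sized profiles of `profile_gb` fit in `total_gb`."""
    if profile_gb <= 0:
        raise ValueError("profile_gb must be positive")
    return total_gb // profile_gb


if __name__ == "__main__":
    print(even_share_gb(GPU_MEMORY_GB, MAX_USERS))  # 2.0 GB per user at 48 users
    print(users_at_profile(GPU_MEMORY_GB, 24))      # 4 users at a hypothetical 24GB profile
```

Under this assumption, the maximum user count corresponds to about 2GB per user, so the 48-user figure suits lighter sessions, while the larger-memory profiles described for AI researchers would support far fewer users per GPU.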

Deployment model

RTX PRO Servers are pitched for data-centre operations and managed IT environments. Studios can deploy virtual workstations via Nvidia vGPU on supported hypervisors and remote workstation platforms. Nvidia says this model fits established infrastructure and IT operating practices rather than requiring bespoke workstation roll-outs in each location.

Nvidia also pointed to existing adoption of vGPU in the games sector, saying major publishers already use the technology to centralise development infrastructure and improve efficiency at scale.

"Game development teams are working across larger worlds, more complex pipelines and more distributed teams than ever," said Paul Logan, Nvidia.